Most e-commerce support teams only review about 2-5% of their customer calls. The other 95% or more? A complete black box.

You have no idea if your agents are giving accurate shipping estimates, following your return policy, or telling customers the wrong thing about a product. And when a bad call leads to a 1-star review or a chargeback, you only find out after the damage is done.

That's what a quality assurance program fixes. It gives you a structured way to monitor calls, coach your agents, and catch problems before they cost you customers. This guide walks through everything you need to build a QA program that actually works for e-commerce customer service, whether you're a two-person support team or running a full call center.

Hear what AI support calls sound like for your store. Just paste your Shopify URL and get sample calls in under 20 seconds, no email required.

What is call center quality assurance?

Call center quality assurance is the process of monitoring, evaluating, and improving customer interactions across your support channels. It covers phone calls, live chat, email, and any other way customers reach you.

Here's how it works in practice. Someone on your team (or a dedicated QA analyst) listens to recorded calls, scores them against a set of criteria, and then uses those scores to coach agents on what to improve.

QA is different from quality control (QC). QC is reactive: you catch mistakes after they happen. QA is proactive: you build processes that prevent mistakes from happening in the first place.

For e-commerce teams, QA matters because your support calls are highly measurable. Did the agent look up the order correctly? Did they follow the return policy? Did they resolve the issue on the first call? These aren't subjective judgment calls. They're concrete outcomes you can track.

According to McKinsey research, automated QA scoring hits accuracy levels above 90%, while manual scoring typically tops out around 70-80%. That gap matters when you're making decisions about agent performance based on those scores.

Why call center quality assurance matters for e-commerce

Here's the business case in one sentence: bad customer experiences put roughly 9.5% of your revenue at risk, according to Qualtrics research.

For a Shopify store doing $500K a year, that's roughly $47,500 on the line (9.5% of $500,000). And it doesn't take a catastrophic failure to lose that revenue. It's the slow bleed of agents who don't know your return policy, can't find order details, or put customers on hold for five minutes to ask a colleague.

On the flip side, customers who have a positive support experience are 3.5x more likely to make another purchase. That's the difference between a one-time buyer and a loyal customer who comes back for years.

Quality assurance helps you in three specific ways:

- Consistency: every caller gets the same accurate information about your products, shipping, and policies

- Cost reduction: catching training gaps early means fewer refunds, chargebacks, and escalations

- Agent development: QA data shows you exactly where each agent needs coaching, instead of guessing

If you want to see how customer service KPIs connect to revenue, that's worth a separate read. But the short version: QA is the system that makes those KPIs move in the right direction.

Key metrics for your QA program

You can't improve what you don't measure. Here are the metrics that matter most for e-commerce quality assurance, based on what we've seen work across hundreds of support teams.

| Metric | What it measures | Target benchmark | How to track |
|---|---|---|---|
| First call resolution (FCR) | Issues resolved on first contact | 70-75% | CRM tagging, post-call survey |
| Average handle time (AHT) | Call duration from pickup to wrap-up | 4-6 minutes (e-commerce) | Phone system reporting |
| Customer satisfaction (CSAT) | Post-call satisfaction rating | 85%+ | Post-call survey (1-5 scale) |
| Quality score | Composite scorecard rating | 80%+ average | QA scorecard evaluations |
| Compliance rate | Adherence to scripts and procedures | 90%+ | QA evaluations |
| Transfer rate | Calls requiring escalation | Under 15% | Phone system reporting |

A few things to keep in mind about these metrics.

First call resolution is the most important metric for e-commerce support. When a customer calls about a missing order and you resolve it on that first call, the customer walks away happy. When they have to call back twice, satisfaction drops off a cliff.

AHT is tricky. Shorter isn't always better. If agents rush through calls to hit a time target, they miss details and create callbacks. The goal is efficiency, not speed.

CSAT and quality scores should move together. If your quality scores are high but CSAT is low, your scorecard is measuring the wrong things. If you want to go deeper on these metrics, check out our guide to call center statistics for more benchmarks.
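If your phone system or CRM can export call records, the four numeric metrics in the table are simple to compute yourself. Here's a minimal sketch in Python. The `Call` fields and the sample data are made up for illustration, and counting CSAT as the share of 4s and 5s on the 1-5 survey is an assumed convention, so match it to however your survey tool reports satisfaction.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Call:
    handle_seconds: int            # pickup through wrap-up
    resolved_first_contact: bool   # no callback needed
    transferred: bool              # escalated to someone else
    csat: Optional[int] = None     # 1-5 survey rating, None if no response


def summarize(calls):
    """Return FCR, AHT, transfer rate, and CSAT for a list of calls."""
    if not calls:
        raise ValueError("need at least one call")
    n = len(calls)
    ratings = [c.csat for c in calls if c.csat is not None]
    return {
        "fcr_pct": 100 * sum(c.resolved_first_contact for c in calls) / n,
        "aht_minutes": sum(c.handle_seconds for c in calls) / n / 60,
        "transfer_pct": 100 * sum(c.transferred for c in calls) / n,
        # Assumed convention: CSAT = share of ratings that are 4 or 5.
        "csat_pct": 100 * sum(r >= 4 for r in ratings) / len(ratings) if ratings else None,
    }


# Hypothetical sample data, not real benchmarks.
sample_calls = [
    Call(240, True, False, 5),
    Call(420, False, True, 2),
    Call(300, True, False, 4),
    Call(360, True, False),        # customer skipped the survey
]
summary = summarize(sample_calls)
print(summary)
```

Calls without a survey response are left out of the CSAT figure rather than counted as unhappy, which is a design choice worth stating whenever you report the number.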

How to build a call center QA program in 7 steps

Building a QA program doesn't require a huge budget or a dedicated team. Even a two-person e-commerce support operation can run effective quality assurance. Here's how.

1. Define your quality standards

Before you grade a single call, get clear on what "good" looks like for your store. This means writing down the specific behaviors and outcomes you expect from every support interaction.

For an e-commerce team, your standards should cover:

- Order handling: agent verifies order number, checks status accurately, communicates timeline clearly

- Product knowledge: agent can answer questions about ingredients, sizing, materials, or whatever your products require

- Policy compliance: agent follows your return, refund, and exchange policies without making exceptions they shouldn't

- Communication: agent is friendly, doesn't use jargon, confirms next steps before hanging up

Tie these standards to your customer service best practices and business goals. If your top priority is reducing refund rates, weight your scorecard toward resolution quality.

2. Create your QA scorecard

Your scorecard is the document evaluators use to grade each call. Keep it to 15-20 criteria max (evaluator fatigue is real), and weight each criterion by how much it matters to your business.

Here's an e-commerce scorecard template you can use right away:

| Category | Criteria | Weight | Score (1-5) |
|---|---|---|---|
| Opening | Professional greeting, verified caller identity | 10% | |
| Order handling | Looked up order correctly, verified details | 15% | |
| Problem resolution | Identified issue, offered correct solution, resolved on first call | 30% | |
| Product knowledge | Accurate info about products, shipping, policies | 15% | |
| Communication | Clear, empathetic, no jargon, confirmed understanding | 15% | |
| Compliance | Followed scripts, used correct tools and procedures | 10% | |
| Closing | Recap of resolution, asked if anything else, professional close | 5% | |

Notice that problem resolution gets 30% of the weight. In e-commerce, the customer called because something went wrong (missing order, wrong item, damaged product). Solving that problem is the entire point of the call.
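To turn a filled-in scorecard into a single quality score, multiply each category's 1-5 rating by its weight and add them up. The sketch below uses the weights from the template. Converting a rating to a percentage as `rating / 5` is an assumption (some teams map 1 to 0% instead), and the example ratings are invented.

```python
# Category weights in percent, taken from the scorecard template.
WEIGHTS = {
    "Opening": 10,
    "Order handling": 15,
    "Problem resolution": 30,
    "Product knowledge": 15,
    "Communication": 15,
    "Compliance": 10,
    "Closing": 5,
}


def quality_score(ratings):
    """Weighted quality score (0-100) from 1-5 ratings per category."""
    if set(ratings) != set(WEIGHTS):
        raise ValueError("ratings must cover exactly the scorecard categories")
    for category, rating in ratings.items():
        if not 1 <= rating <= 5:
            raise ValueError(f"{category}: rating must be between 1 and 5")
    # Assumption: a rating of 5 earns the full weight, 1 earns a fifth of it.
    return sum(WEIGHTS[c] * ratings[c] for c in WEIGHTS) / 5


# Hypothetical evaluation of one call.
example_ratings = {
    "Opening": 5,
    "Order handling": 4,
    "Problem resolution": 3,
    "Product knowledge": 4,
    "Communication": 5,
    "Compliance": 5,
    "Closing": 4,
}
example_score = quality_score(example_ratings)
print(example_score)  # 81.0
```

In this example the agent scored 4s and 5s everywhere except problem resolution, and that single 3 is enough to pull the call down to 81%. That's the weighting doing its job.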

3. Set up your monitoring process

How many calls should you review? Industry best practice from Scorebuddy research says 5-10 evaluations per agent per month. For small teams, start with 5.

You've got three monitoring approaches:

- Random sampling: pull calls at random for a baseline view of quality

- Targeted monitoring: prioritize calls from customers who complained, high-value orders, or new agents still in training

- 100% automated monitoring: use AI tools that score every call automatically (more on this below)

Most teams start with a mix of random and targeted. If you only have bandwidth for 5 calls per agent, make 2 random and 3 targeted toward complaints or new agents.
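Here's one way to pull that 2-random, 3-targeted mix for a single agent, as a rough Python sketch. The call records and their `complaint` and `high_value_order` flags are hypothetical; use whatever tags your phone system or help desk actually exports. If an agent has fewer than three flagged calls, this version tops up with extra random picks so you still review five.

```python
import random


def pick_calls_for_review(calls, n_random=2, n_targeted=3, seed=None):
    """Split one agent's monthly review quota into targeted and random picks."""
    rng = random.Random(seed)  # pass a seed to make the selection repeatable

    # Targeted pool: calls flagged as complaints or high-value orders.
    flagged = [c for c in calls if c["complaint"] or c["high_value_order"]]
    targeted = rng.sample(flagged, min(n_targeted, len(flagged)))

    # Random picks come from everything not already chosen.
    chosen_ids = {c["id"] for c in targeted}
    remaining = [c for c in calls if c["id"] not in chosen_ids]
    shortfall = n_targeted - len(targeted)
    random_picks = rng.sample(remaining, min(n_random + shortfall, len(remaining)))
    return targeted, random_picks


# Hypothetical month of calls for one agent.
agent_calls = [
    {"id": i, "complaint": i % 5 == 0, "high_value_order": i % 7 == 0}
    for i in range(20)
]
targeted, random_picks = pick_calls_for_review(agent_calls, seed=1)
print([c["id"] for c in targeted], [c["id"] for c in random_picks])
```

For new agents still in training, the simplest adjustment is to raise `n_targeted` for their first few months rather than adding another flag.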

4. Run calibration sessions

This step is more important than the scorecard itself. Without calibration, two evaluators can score the same call 20-30% apart, according to Scorebuddy data.

Here's how calibration works. Once a week, everyone who evaluates calls listens to the same recording independently. Then you compare scores and discuss the differences. Where did you disagree? Why? What's the "right" score?

These sessions take 30-45 minutes per week. They're worth every minute because they make your entire QA program reliable.
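A quick way to see whether your evaluators are aligned is to measure the spread of their scores on the same recording. The sketch below is an illustration only: the evaluator names and scores are invented, and the 5-point tolerance is an assumed threshold you'd tune for your own team.

```python
from statistics import mean


def calibration_report(scores_by_evaluator, tolerance=5.0):
    """Compare evaluators' quality scores (0-100) for one shared call."""
    scores = list(scores_by_evaluator.values())
    average = mean(scores)
    return {
        "mean": average,
        # Gap between the harshest and most generous evaluator, in points.
        "spread": max(scores) - min(scores),
        # Evaluators whose score sits further from the mean than the tolerance.
        "outliers": sorted(
            name
            for name, score in scores_by_evaluator.items()
            if abs(score - average) > tolerance
        ),
    }


# Hypothetical scores for the same recording.
session = {"Ana": 82, "Ben": 74, "Caro": 60}
report = calibration_report(session)
print(report)  # {'mean': 72, 'spread': 22, 'outliers': ['Ana', 'Caro']}
```

A 22-point spread like this one is exactly what the discussion part of the session is for. Track the spread week over week, and it should shrink as evaluators converge on what each score means.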

5. Build a coaching framework

QA without coaching is just surveillance. The whole point of scoring calls is to help agents improve.

A few rules that work:

- Share feedback within 48 hours of the call so agents remember the interaction

- Lead with what went well before discussing what to improve

- Be specific: "You forgot to verify the order number before looking it up" is useful. "You need to be more thorough" is not.

- Use real call recordings in coaching sessions so agents can hear themselves

The best QA programs pair scoring with a coaching plan. Check out our guide on customer service training for e-commerce for specific coaching techniques.

6. Pick your QA tools

Your tool choice depends on your team size and budget:

- Small teams (1-5 agents): a spreadsheet scorecard plus your phone system's call recording is enough to start

- Mid-size teams (6-20 agents): dedicated QA platforms like Scorebuddy (4.5/5 on G2), Playvox (4.8/5 on G2), or MaestroQA make evaluation faster and more consistent

- AI-powered monitoring: tools like Convin.ai (4.7/5 on G2) analyze 100% of calls automatically with sentiment analysis and automated scoring

Here's a stat that should make you think: according to G2, 67% of contact centers still use manual QA. That means most teams are reviewing a tiny fraction of their calls by hand. Call monitoring software can help close that gap.

If you're using AI call center software, you might already have QA features built in. Tools like Ringly.io include call recordings, transcripts, and AI call analysis as standard features, so you can review every interaction without extra software.

See what AI-powered call analysis looks like for your store. Setup takes about three minutes.

7. Review and improve quarterly

Your QA program isn't "set it and forget it." Review it every quarter.

Look at trends, not individual calls. Is your average quality score going up? Are certain criteria consistently low across the team? Has your product lineup changed enough that your scorecard needs updating?
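To spot criteria that are consistently low across the team, average each scorecard category over the quarter's evaluations instead of looking at overall scores. This is a minimal sketch. The evaluations are made-up data, and the 3.5 cutoff on the 1-5 scale is an assumed threshold, not an industry standard.

```python
from collections import defaultdict


def criterion_averages(evaluations):
    """Average 1-5 rating per scorecard category across many evaluations."""
    ratings = defaultdict(list)
    for evaluation in evaluations:
        for criterion, rating in evaluation.items():
            ratings[criterion].append(rating)
    return {c: sum(r) / len(r) for c, r in ratings.items()}


def weak_criteria(evaluations, threshold=3.5):
    """Categories whose team-wide average falls below the threshold."""
    averages = criterion_averages(evaluations)
    return sorted(c for c, avg in averages.items() if avg < threshold)


# Hypothetical evaluations from one quarter (three categories shown).
quarter = [
    {"Problem resolution": 3, "Communication": 5, "Compliance": 4},
    {"Problem resolution": 3, "Communication": 4, "Compliance": 5},
    {"Problem resolution": 4, "Communication": 4, "Compliance": 4},
]
flagged = weak_criteria(quarter)
print(flagged)  # ['Problem resolution']
```

Run the same calculation on last quarter's evaluations and compare the two sets of averages to see whether coaching is moving the numbers.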

Adjust the scorecard when your business changes. If you launch a subscription service, add criteria for subscription management. If you start offering exchanges instead of just refunds, update your resolution criteria.

How AI is changing call center quality assurance

Traditional QA has a fundamental problem: you can only review the calls you listen to. And for most teams, that's somewhere between 1% and 5% of total volume.

AI flips that equation. With AI-powered QA tools, you can analyze 100% of interactions automatically. The QA software market is growing fast, projected to go from $2.25 billion in 2025 to $4.09 billion by 2032, and most of that growth is in AI-powered solutions.

Here's what AI-powered QA actually does:

- Automated scoring: every call gets scored against your criteria without a human evaluator

- Sentiment analysis: detects customer frustration, confusion, or satisfaction in real time

- Keyword and compliance tracking: flags when agents miss required disclosures or skip steps

- Trend detection: spots patterns across thousands of calls that no manual review could catch
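To make the keyword and compliance tracking idea concrete, here's a deliberately simple Python sketch that checks a call transcript for required steps. It is not how any particular QA product works; commercial tools use far more than keyword matching. The step names, patterns, and transcript are all hypothetical.

```python
import re

# Hypothetical required steps, each with a pattern that signals it happened.
REQUIRED_STEPS = {
    "asked_for_order_number": r"\border number\b",
    "mentioned_return_policy": r"\breturn policy\b",
    "offered_further_help": r"\banything else\b",
}


def missing_steps(transcript, required=REQUIRED_STEPS):
    """Return the required steps with no matching phrase in the transcript."""
    text = transcript.lower()
    return sorted(
        step for step, pattern in required.items() if not re.search(pattern, text)
    )


# Invented transcript for illustration.
transcript = (
    "Thanks for calling! Can I get your order number? "
    "I see the blender arrived damaged. Under our return policy "
    "we can send a replacement today. Have a great day!"
)
missed = missing_steps(transcript)
print(missed)  # ['offered_further_help']
```

Even a crude check like this can run on every transcript, which is the real advantage over manual sampling: it won't judge tone, but it will never skip a call.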

But here's where it gets really interesting for e-commerce teams. If the AI isn't just doing QA but is actually handling the calls, you sidestep most of these QA problems.

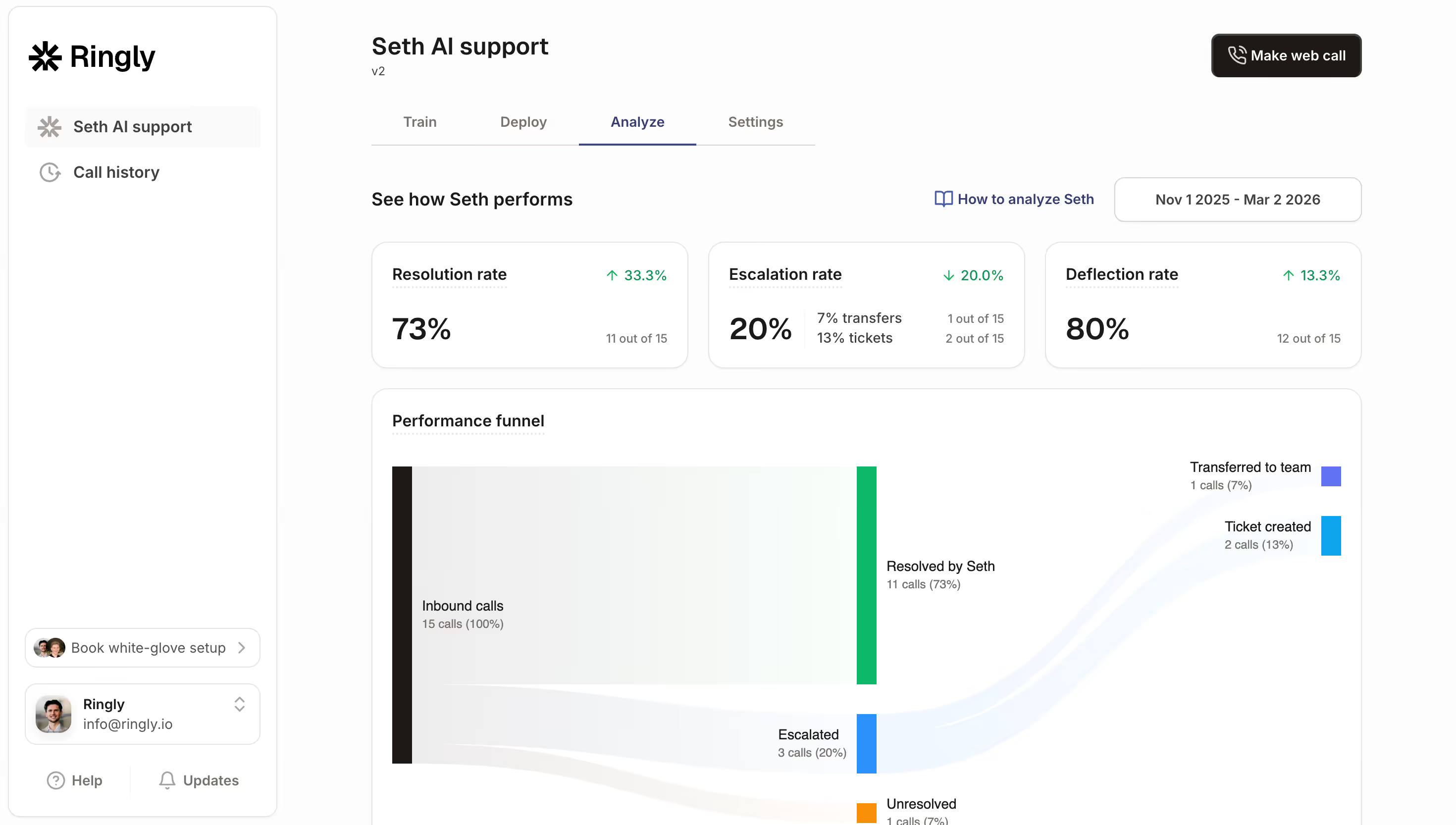

An AI phone agent like Ringly.io's Seth handles calls consistently every time. It always looks up the order before answering. It always follows your return policy. It never has a bad day or forgets a product detail. The resolution rate across 2,100+ Shopify stores is about 73%, and every call is recorded and transcribed for review.

That shifts QA from "are our agents performing well?" to "are our AI agent's responses accurate?" Which is a much simpler question to answer. You review transcripts, check that order lookups are working, and verify policy responses are correct. No scorecards, no calibration sessions, no coaching plans.

If you're curious about how AI is changing call centers more broadly, our post on that topic covers the full picture. For the QA angle specifically, AI agents are the biggest shift since call recording was invented.

Want to hear what AI support calls sound like? Try Ringly.io free for 14 days and listen to real call recordings from day one.

Common call center QA mistakes to avoid

Even good QA programs can go sideways. Here are the mistakes we see most often:

- Scoring too many criteria: if your scorecard has 30+ items, evaluators burn out and start rushing through evaluations. Keep it to 15-20 criteria max.

- Treating QA as punishment: if agents only hear from QA when they mess up, they'll learn to game the system. Celebrate good calls, not just flag bad ones.

- Only reviewing complaints: you'll learn what goes wrong, but you'll miss what your top performers do right. Include random sampling alongside targeted reviews.

- Skipping calibration: without regular calibration sessions, your scores are inconsistent and your coaching is based on unreliable data. According to Scorebuddy data, evaluators can score the same call 20-30% apart without calibration.

- Not connecting QA to business outcomes: a 95% quality score means nothing if your CSAT is dropping. Tie your QA metrics to customer experience statistics that actually matter to the business.

- Reviewing too few calls: 1-2% coverage gives you a distorted picture. If you can't review more manually, look into call center analytics software or AI tools that handle the volume.

The common thread across all these mistakes? QA only works when it's consistent, fair, and connected to actual business results.

Frequently asked questions

What does a call center quality assurance analyst do?

A QA analyst listens to recorded calls, scores them against a predefined scorecard, and identifies coaching opportunities for agents. In e-commerce, they also check that agents follow return policies, provide accurate order information, and use the right tools. Most analysts review 20-40 calls per week.

How many calls should you review per agent per month?

Industry best practice is 5-10 evaluations per agent per month. Small teams (under 50 agents) should target 5-6, while mid-size teams can aim for 8-10. The more important factor is consistency: reviewing 5 calls every month beats reviewing 20 calls once a quarter.

What's the difference between QA and quality management?

QA focuses specifically on evaluating and improving individual interactions (calls, chats, emails). Quality management is broader and includes QA plus workforce management, training programs, process design, and continuous improvement. Think of QA as one piece of the quality management puzzle.

How much does call center QA software cost?

Entry-level QA tools start around $15-25 per user per month. Mid-tier platforms like Scorebuddy or Playvox run $30-60 per user per month. Enterprise solutions with AI-powered analysis can cost $100+ per user per month. Some AI call center software includes QA features in the base price.

Can AI replace manual QA in a call center?

AI can handle the heavy lifting: scoring 100% of calls, detecting sentiment, flagging compliance issues. But most teams still need humans for calibration, coaching conversations, and judging nuance that AI might miss. The best approach in 2026 is AI for coverage and humans for coaching. Or you can skip the whole equation by using an AI phone agent that handles calls consistently from the start.

How do you measure call center quality assurance success?

Track three things: your average quality score (should trend upward over time), your CSAT scores (should correlate with quality improvements), and your first call resolution rate (the most direct measure of whether agents are actually solving problems). A well-run QA program typically improves CSAT by 15-25% within six months.

Build QA into your e-commerce support from day one

You don't need a 50-person call center to run quality assurance. You need a scorecard, a weekly review cadence, and a commitment to coaching over punishment.

Start simple. Pick 10-15 scorecard criteria weighted toward problem resolution. Review 5 calls per agent per month. Hold a 30-minute calibration session every Friday. That's a QA program.

As your team grows, layer in AI-powered tools that analyze every call automatically. Or go further and let an AI agent handle the calls entirely, so quality is built into every interaction by design.

If you run a Shopify store, Ringly.io gets your AI phone agent live in about three minutes, with built-in call recordings and analytics. Start your free 14-day trial and see what consistent, automated support looks like.